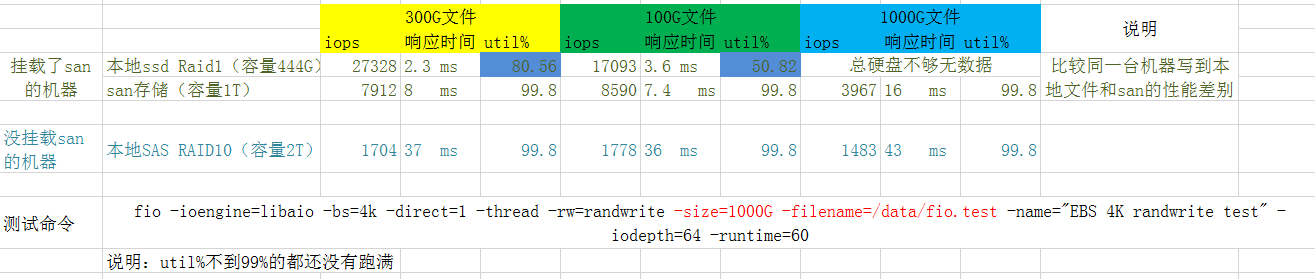

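Performance comparison of SSD, SAN (fibre) and SAS disks

I recently had the chance to use a SAN storage device, so I ran a round of benchmarks and am recording the numbers here.

The test command used: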


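The SSD (Solid State Drive) and SAN comparison was run on the same physical machine, which rules out interference from other factors.

Brief conclusions:

The local SSD performs best; the SAS mechanical disks (RAID10) perform worst.

The SAN storage goes over a dedicated fibre network rather than a TCP-based SAN (at least, no SAN traffic shows up on the NICs); its performance sits in the middle.

In terms of response time (rt), ssd:san:sas is roughly 1:3:15.

The SAN outperforms the local SAS mechanical disks, which probably depends on the SAN's network transfer performance and on the devices inside the SAN storage (for example, SSDs rather than mechanical disks).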

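NVMe SSD vs. HDD performance

The performance gap in the table is even larger than in the test above: SSD random IO latency is more than a hundred times lower than that of a traditional hard disk, generally at the microsecond level; IO bandwidth is also many times higher, reaching several GB per second; and random IOPS is more than a thousand times higher, reaching several hundred thousand.

An HDD's heads move together on a single actuator and can only be in one place at a time, so issuing concurrent requests gains little, whereas an SSD supports highly concurrent reads and writes. An SSD has no heads and nothing to spin, so random reads and sequential reads perform almost the same.

SSD performance characteristics and mechanisms

An SSD works very differently from an HDD internally, so let's first go over a few concepts.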

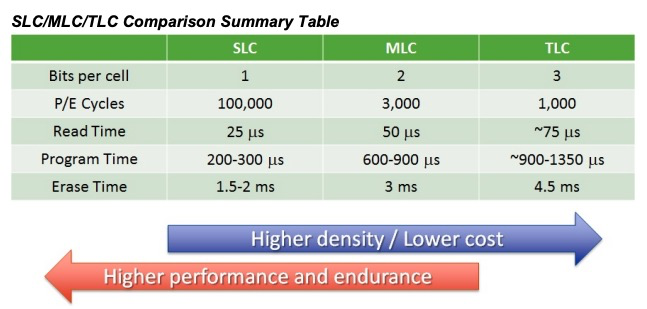

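Cell, Page, Block. Today's mainstream SSDs are based on NAND flash, which stores bits in cells. Each SSD cell can store one or more bits. Every erase shortens a cell's life, so a cell can only withstand a limited number of erases. The more bits a cell stores, the lower the manufacturing cost per bit and the larger the SSD's capacity, but the lower its endurance (number of erase cycles).

A page consists of many cells; a typical page size is 4KB, and the page is the smallest unit that can be read or written. There is no "overwrite" operation on an SSD, unlike an HDD, where data can be overwritten in place. Once a page has been written it cannot be partially rewritten; it must be erased and reset as a whole together with its neighbouring pages.

Multiple pages are grouped into a block. A typical block size is 512KB or 1MB, that is, about 128 or 256 pages. The block is the basic unit of erasure: each erase resets every page in the block.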
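The page and block sizes quoted above can be checked with a few lines of arithmetic. This is only a sketch; the helper name `pages_per_block` is made up for illustration, and the 4KB page size is the typical value from the text, not a property of any particular drive.

```python
KIB = 1024


def pages_per_block(block_bytes: int, page_bytes: int = 4 * KIB) -> int:
    """Number of pages that make up one erase block."""
    if block_bytes % page_bytes:
        raise ValueError("block size must be a multiple of the page size")
    return block_bytes // page_bytes


for block_kib in (512, 1024):
    pages = pages_per_block(block_kib * KIB)
    print(f"{block_kib} KiB block = {pages} pages of 4 KiB")
```

Rewriting a single 4KB page in place would therefore mean erasing 128 or 256 pages, which is why SSDs write new data elsewhere and clean up later.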

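Erasing is relatively slow, typically a few milliseconds. For synchronous IO, the application issuing the IO may therefore see a large write latency when a block has to be erased first. To keep such cases to a minimum, maintaining a threshold of free blocks is necessary for fast write response. This is the purpose of SSD garbage collection (GC): it reclaims used blocks so that later page writes can quickly be allocated a fresh page.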

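How an SSD works

As a simplified model, think of an SSD cell as a capacitor plus a voltmeter that together record one or more bits (in real NAND flash the charge is held in a floating gate). Recording one bit is easy to understand: when the capacitor is charged and has a voltage, that is 1; when it is discharged and holds no charge, that is 0. SSDs that store data this way are said to use SLC chips, short for Single-Level Cell, meaning each storage cell holds only one bit.

However, this approach runs into a problem similar to that of the CPU cache: only so many components fit into a given area. With SLC alone, capacity cannot go up and price cannot come down. So hardware engineers successively invented MLC (Multi-Level Cell), TLC (Triple-Level Cell) and QLC (Quad-Level Cell), which store 2, 3 and even 4 bits in a single capacitor.

How can a single capacitor represent more bits? Don't forget that we also have a voltmeter. 4 bits can represent 16 different values, from 0000 to 1111. So if we can charge the capacitor to 15 different voltages, and the voltmeter can tell those 15 voltages apart, then together with the fully discharged state standing for 0 we can represent every 4-bit value from 0000 to 1111.

However, representing 15 distinct voltages demands higher precision when charging and reading, which makes both slower. That is why a QLC SSD reads and writes several times slower than an SLC one.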
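The relation between bits per cell and the number of voltage states that must be told apart is just a power of two. A small sketch (the names `CELL_TYPES` and `voltage_states` are illustrative only):

```python
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}


def voltage_states(bits_per_cell: int) -> int:
    """Distinct charge states needed to encode the given number of bits."""
    return 2 ** bits_per_cell


for name, bits in CELL_TYPES.items():
    states = voltage_states(bits)
    print(f"{name}: {bits} bit(s) -> {states} states "
          f"({states - 1} charged levels plus the empty state)")
```

Each extra bit doubles the number of states squeezed into the same voltage range, which is where the tighter precision requirement comes from.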

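SSDs are sensitive to fragmentation. Much like memory fragmentation in the JVM, which has to be compacted, defragmentation brings write amplification: when there is not enough free space for a write, existing data must first be compacted and moved, so more data ends up being written than was requested.

SSD lifetime: taking the Intel 335 as an example, with 600TB of total writes, a heavy BT (BitTorrent) user writing about 843GB a day gets 600TB × 1024 / 843 ≈ 728 days, while an ordinary user writing about 2TB a year gets 600TB / 2 = 300 years. The outlook is quite optimistic.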
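The lifetime estimate above is plain division. In the sketch below, the 600TB endurance figure comes from the text, while the write rates (843GB per day, 2TB per year) are read off the arithmetic in the text and should be treated as assumptions; the function names are hypothetical.

```python
def lifetime_days(endurance_tb: float, gb_written_per_day: float) -> float:
    """Days until the rated write volume is used up (1 TB = 1024 GB)."""
    return endurance_tb * 1024 / gb_written_per_day


def lifetime_years(endurance_tb: float, tb_written_per_year: float) -> float:
    """Years until the rated write volume is used up."""
    return endurance_tb / tb_written_per_year


print("heavy BT user:", int(lifetime_days(600, 843)), "days")
print("ordinary user:", lifetime_years(600, 2), "years")
```

Note that this ignores write amplification, which would shorten both figures.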

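Two kinds of logic gate

NAND (NOT-AND) gate

NOR (NOT-OR) gate

The media built from both kinds of gate are non-volatile, and both must be erased before writing. More precisely, a bit in NOR flash can be changed from 1 to 0 directly, but changing it from 0 back to 1 requires erasing the whole block. NAND flash always requires an erase.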

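| | NAND Flash | NOR Flash |
|---|---|---|
| Chip capacity | <32 Gbit | <1 Gbit |
| Access mode | Block read/write (sequential) | Random read/write |
| Interface | Any I/O port | Dedicated full memory interface |
| Read/write performance | Fast read (sequential), fast write, fast erase (by block) | Fast read (RAM-style), slow write, very slow erase |
| Lifetime | About 1,000,000 cycles | About 100,000 cycles |
| Price | Low | High |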

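NAND flash is better suited to devices that need to store large amounts of data, such as USB drives, SSDs, memory cards and MP3 players, while NOR flash is better suited to high-performance industrial products.

High-end SSDs use MLC (Multi-Level Cell) or even SLC (Single-Level Cell), while low-end SSDs use TLC (Triple-Level Cell). SD cards generally use TLC.

NOR flash is mainly used for BIOS chips and set-top boxes, typically in sizes of 1-32MB.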

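Why an SSD does not lose data when powered off

The storage hardware in an SSD is NAND flash. It works by changing the voltage so that electrons are driven into the floating gate (FG) inside an insulating layer. After power is removed, the electrons stay in the FG. But if the drive goes unpowered for a long time, say several years, data can still be lost. In other words, SSDs are indeed not well suited to cold-data backup.

For example, the standard requires that an SSD retain its data for 52 weeks at 30 degrees Celsius.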

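Write Amplification (WA)

This is a drawback of SSDs relative to HDDs: the amount of data physically written to the SSD can be several times the amount written by the application layer. On the one hand, page-level writes require moving existing data to free up pages. On the other hand, GC also moves user data around in order to erase at block granularity. So the data actually written to the flash can exceed the data the application wrote; this is write amplification. An SSD can only be erased a limited number of times, counted in program/erase (P/E) cycles, so the write amplification effect shortens the SSD's life.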
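To make the effect concrete, here is a toy simulation of out-of-place writes with greedy garbage collection. It is a sketch under strong assumptions, not how any real controller works: the `ToyFTL` class, the geometry (64 blocks of 32 pages), the 25% spare capacity and the "fewest live pages" victim policy are all chosen for illustration.

```python
import random


class ToyFTL:
    """Toy flash translation layer: out-of-place page writes plus greedy GC."""

    def __init__(self, num_blocks=64, pages_per_block=32):
        self.pages_per_block = pages_per_block
        self.valid = [set() for _ in range(num_blocks)]  # live logical pages per block
        self.used = [0] * num_blocks                     # pages programmed since last erase
        self.free_blocks = list(range(1, num_blocks))    # fully erased blocks
        self.open_block = 0                              # block currently being filled
        self.location = {}                               # logical page -> block holding it
        self.host_writes = 0
        self.flash_writes = 0

    def _program(self, lpn):
        """Write one logical page into the open block (never in place)."""
        if self.used[self.open_block] == self.pages_per_block:
            self.open_block = self.free_blocks.pop()
        old_block = self.location.get(lpn)
        if old_block is not None:
            self.valid[old_block].discard(lpn)  # the old copy becomes garbage
        self.valid[self.open_block].add(lpn)
        self.used[self.open_block] += 1
        self.location[lpn] = self.open_block
        self.flash_writes += 1

    def _collect_garbage(self):
        """Erase the full block with the fewest live pages, relocating them first."""
        full = [b for b, n in enumerate(self.used)
                if n == self.pages_per_block and b != self.open_block]
        victim = min(full, key=lambda b: len(self.valid[b]))
        for lpn in list(self.valid[victim]):
            self._program(lpn)  # relocation costs extra flash writes
        self.used[victim] = 0   # block erase
        self.free_blocks.append(victim)

    def write(self, lpn):
        self.host_writes += 1
        self._program(lpn)
        while len(self.free_blocks) < 2:  # keep a small reserve of erased blocks
            self._collect_garbage()

    @property
    def write_amplification(self):
        return self.flash_writes / self.host_writes


random.seed(0)
LOGICAL_PAGES = 1536  # 75% of the 64 * 32 = 2048 physical pages

ftl = ToyFTL()
for lpn in range(LOGICAL_PAGES):  # sequential fill: no GC needed yet
    ftl.write(lpn)
print("after sequential fill:", ftl.write_amplification)

for _ in range(20_000):  # random overwrites force GC to relocate live pages
    ftl.write(random.randrange(LOGICAL_PAGES))
print("after random overwrites:", round(ftl.write_amplification, 2))
```

The ratio `flash_writes / host_writes` stays at 1 while there are still erased blocks to write into and rises above 1 once GC has to copy live pages out of the blocks it erases.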

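The basic unit of reading and writing on an SSD is not a bit or a byte but a page. The erase unit is coarser still: we cannot erase by bit or byte, nor even by page; we must erase by block.

SLC chips can be erased roughly 100,000 times, MLC about 10,000 times, and TLC and QLC only a few thousand times. This is why, when shopping for SSDs, you see large price differences at the same capacity: the flash chips and their lifetimes are completely different.

Wear Leveling

Once a block reaches its maximum number of P/E cycles, it dies. The typical number of P/E cycles is 100,000 for an SLC block, 10,000 for an MLC block, and possibly a few thousand for a TLC block. To preserve the SSD's capacity and performance, erase counts need to be balanced across blocks, and the SSD controller's "wear leveling" mechanism does this. During wear leveling, data is moved between blocks to even out wear, which also adds to the write amplification described above.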
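The core idea of wear leveling can be sketched as "always erase and reuse the least-worn block". The code below is a deliberately simplified illustration with hypothetical names; real controllers also migrate rarely changed data, which is not modelled here.

```python
from typing import List


def pick_least_worn(erase_counts: List[int]) -> int:
    """Index of the block with the fewest erases (ties go to the lowest index)."""
    return min(range(len(erase_counts)), key=lambda b: (erase_counts[b], b))


def simulate(num_blocks: int, erases: int, leveled: bool) -> List[int]:
    """Spread a number of erase operations over the blocks and return the counts."""
    counts = [0] * num_blocks
    for _ in range(erases):
        # Without leveling this toy model keeps reusing block 0.
        block = pick_least_worn(counts) if leveled else 0
        counts[block] += 1
    return counts


print("with wear leveling:   ", simulate(8, 1000, leveled=True))
print("without wear leveling:", simulate(8, 1000, leveled=False))
```

With leveling, the erases end up spread evenly, so no single block hits its P/E limit long before the others.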

Checking the disk type

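One common way to tell rotational disks from SSDs on Linux is the `rotational` flag that the kernel exposes under `/sys/block/<device>/queue/`. The sketch below reads it from Python; the function names are made up for illustration, and disks behind a RAID controller or a hypervisor may not report their real type.

```python
from pathlib import Path
from typing import Dict


def classify_rotational(flag_text: str) -> str:
    """Map the content of the sysfs 'rotational' file to a readable label."""
    if flag_text.strip() == "1":
        return "HDD (rotational)"
    return "SSD or other non-rotational device"


def list_disk_types(sysfs_root: str = "/sys/block") -> Dict[str, str]:
    """Return {device name: label} for every block device that has the flag."""
    root = Path(sysfs_root)
    if not root.is_dir():  # not Linux, or sysfs is not mounted
        return {}
    result = {}
    for dev in sorted(root.iterdir()):
        flag = dev / "queue" / "rotational"
        if flag.is_file():
            result[dev.name] = classify_rotational(flag.read_text())
    return result


for name, kind in list_disk_types().items():
    print(f"{name}: {kind}")
```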

fio tests

Below is the basic state of the two SSDs under test before the tests began:


NVMe SSD test data

The following test was run against one SSD (mounted at /data):


slat (usec): min=4, max=6154, avg=48.82, stdev=56.38: The first latency metric you’ll see is the ‘slat’ or submission latency. It is pretty much what it sounds like, meaning “how long did it take to submit this IO to the kernel for processing?”
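When comparing many runs it helps to pull these latency lines apart programmatically. The sketch below parses a line in the format shown above with a regular expression; `parse_latency_line` is a hypothetical helper, it handles only the plain numeric form quoted here, and fio's output format varies between versions.

```python
import re
from typing import Dict, Optional, Union

LATENCY_LINE = re.compile(
    r"^\s*(?P<kind>slat|clat|lat)\s*\((?P<unit>nsec|usec|msec)\):\s*(?P<fields>.*)$"
)
TO_USEC = {"nsec": 0.001, "usec": 1.0, "msec": 1000.0}


def parse_latency_line(line: str) -> Optional[Dict[str, Union[str, float]]]:
    """Parse e.g. 'slat (usec): min=4, max=6154, avg=48.82, stdev=56.38'."""
    match = LATENCY_LINE.match(line)
    if match is None:
        return None
    parsed: Dict[str, Union[str, float]] = {
        "kind": match.group("kind"),
        "unit": match.group("unit"),
    }
    for key, value in re.findall(r"(\w+)=\s*([\d.]+)", match.group("fields")):
        parsed[key] = float(value)
    return parsed


sample = "slat (usec): min=4, max=6154, avg=48.82, stdev=56.38"
stats = parse_latency_line(sample)
print(stats)
print("avg in usec:", stats["avg"] * TO_USEC[stats["unit"]])
```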

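The test above measured 18944 IOPS. Below is the iostat output during the test. MySQL was importing data throughout, so util was already at 100% before the test started and w/s had reached about 13K.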


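iostat shows that util was already 100% before the test started (on an SSD, util loses its value as a reference), with w/s around 13K. Once the load was running, w/s reached 30K, while svctm and await both stayed stable.

The direct vs. buffered results on the SSD look odd: direct=0 ought to perform better, but in practice it does not. This still needs to be checked against the documentation.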

`direct=bool`: If value is true, use non-buffered I/O. This is usually O_DIRECT. Note that OpenBSD and ZFS on Solaris don’t support direct I/O. On Windows the synchronous ioengines don’t support direct I/O. Default: false.

`buffered=bool`: If value is true, use buffered I/O. This is the opposite of the `direct` option. Defaults to true.

In the test below, the average write IOPS with direct=1 and direct=0 were 42K and 16K respectively:


SATA SSD test data


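With -direct=0 -buffered=0, read and write IOPS were 15.8K and 6.8K, well below the NVMe SSD (both measured with direct=0). With direct and buffered both set to 1, the SATA SSD performs poorly: read and write IOPS were 4312 and 1852.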


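ESSD test data

This is a virtual Alibaba Cloud network disk, not an SSD in the full sense (committed IOPS: 4200), so the data is for reference only. Disk overview: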


Test data:


Test data summary

| | -direct=1 -buffered=1 | -direct=1 -buffered=0 | -direct=0 -buffered=0 | -direct=0 -buffered=1 |
|---|---|---|---|---|
| NVMe SSD | R=10.6K W=4544 | R=99.8K W=42.8K | R=38.6K W=16.5K | R=10.8K W=4642 |
| SATA SSD | R=4312 W=1852 | R=16.9K W=7254 | R=15.8K W=6803 | R=5389 W=2314 |
| ESSD | R=2149 W=2150 | R=2462 W=2465 | R=2455 W=2458 | R=1987 W=1984 |

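It appears that for SSDs, when buffered is 1, direct makes no difference, whereas when buffered is 0, direct=1 performs much better. One possible explanation: fio documents buffered as the opposite of direct, so the two flags may control the same setting, with the later one on the command line overriding the earlier.

The SATA SSD's IOPS is far lower than the NVMe SSD's.

With -buffered=1, the SATA SSD's latency is between 7 and 10us.

With buffered=0, both the NVMe SSD and the SATA SSD have a latency of 2-3us. For NVMe SSD latency, see the first table in the reference article; it agrees with this NVMe test.

The ESSD's latency is mostly 13-16us.

The NVMe SSD data above was measured with fio while MySQL was importing data at full speed, so results on an idle system would be better.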

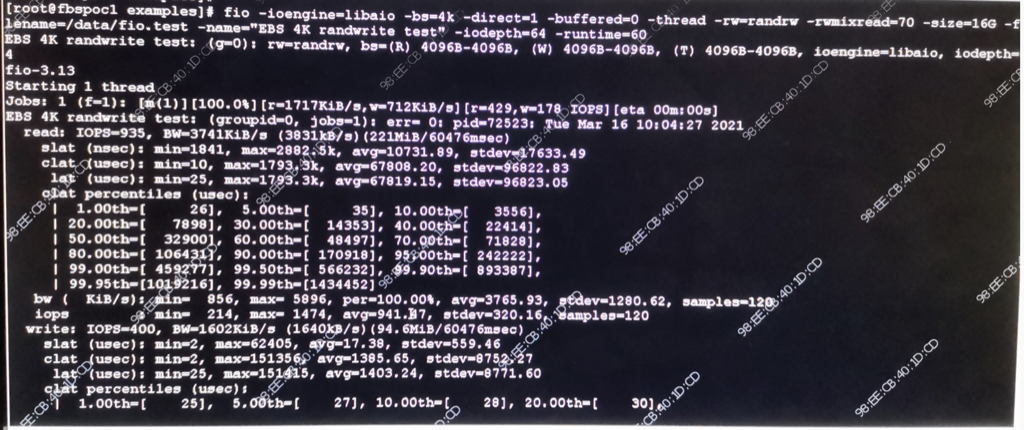

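HDD performance test data

The figure above shows this disk at 935 read IOPS and 400 write IOPS, with a read rt of 10731 nsec (about 10us) and a write rt of 17us. At 1000 IOPS the rt should be about 1ms; the measured value is two orders of magnitude below 1ms, so cache and the disk array must be at work.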
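The "1000 IOPS means about 1ms" reasoning follows from Little's law: with a fixed number of requests in flight, average latency is the queue depth divided by the throughput. The sketch below assumes a queue depth of 1 (the real fio settings are not shown in this post), and the function name is hypothetical.

```python
def expected_latency_us(iops: float, iodepth: int = 1) -> float:
    """Average per-request latency implied by Little's law, in microseconds."""
    return iodepth * 1_000_000 / iops


measured_read_us = 10.731  # 10731 nsec, from the figure
implied_us = expected_latency_us(935)

print(f"latency implied by 935 IOPS at depth 1: {implied_us:.0f} us")
print(f"measured: {measured_read_us} us, about {implied_us / measured_read_us:.0f}x lower")
```

A gap this large between the implied and the measured latency is what points to a cache, rather than the platters, answering the requests.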

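SATA hard disks, 10K RPM

A RAID5 array built from 10K RPM mechanical disks can, under the best sequential conditions, deliver more than 1GB/s of bandwidth with very low average latency, as low as 20-odd us. Under random IO, however, the weakness of mechanical disks is fully exposed: a few tenths of a MB/s of bandwidth, nearly 5ms of latency, and only about 200 IOPS. The reasons are:

- Random access turns the RAID card's cache into mere decoration.

- The disks cannot work in parallel: the RAID strip size on my machine is 128 KB, so each small random request lands on a single disk.

- The actuator arm has to jump back and forth between tracks.

Next to the tens of MB/s, or even 1GB/s, of sequential IO, random IO is truly pitiful.

The test data above shows the huge gap between sequential and random IO on mechanical disks. Sequential IO is what disks do best, and the RAID card's cache hit rate is high as well; bandwidth is then tens to hundreds of MB/s, up to 1GB/s under the best conditions, and IOPS can reach about 20-30K. Under random IO, the actuator arm is forced to seek back and forth and the RAID card cache becomes ineffective; bandwidth falls below 1MB/s, as low as 100KB/s, and IOPS is a meagre 200 or so.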
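The roughly 5ms latency and 200 IOPS can be reproduced with a back-of-the-envelope model: each random request pays an average seek plus half a rotation. In the sketch below the 2ms average seek time and the 4KB request size are assumptions picked for illustration, not measured values, and the function names are hypothetical.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """On average the platter must turn half a revolution to reach the sector."""
    return 0.5 * 60_000 / rpm


def random_iops(rpm: float, avg_seek_ms: float) -> float:
    """Requests per second when every request pays seek + rotational latency."""
    return 1000 / (avg_seek_ms + avg_rotational_latency_ms(rpm))


def bandwidth_kib_per_s(iops: float, request_kib: float) -> float:
    return iops * request_kib


rot = avg_rotational_latency_ms(10_000)
iops = random_iops(10_000, avg_seek_ms=2.0)
print(f"rotational latency: {rot} ms")
print(f"random IOPS: {iops:.0f}")
print(f"bandwidth at 4 KiB per request: {bandwidth_kib_per_s(iops, 4):.0f} KiB/s")
```

At about 200 small requests per second the bandwidth necessarily stays below 1MB/s, in line with the numbers above.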

网上测试数据参考

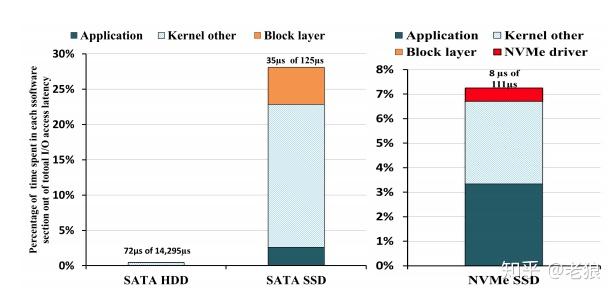

我们来一起看一下具体的数据。首先来看NVMe如何减小了协议栈本身的时间消耗,我们用blktrace工具来分析一组传输在应用程序层、操作系统层、驱动层和硬件层消耗的时间和占比,来了解AHCI和NVMe协议的性能区别:

硬盘HDD作为一个参考基准,它的时延是非常大的,达到14ms,而AHCI SATA为125us,NVMe为111us。我们从图中可以看出,NVMe相对AHCI,协议栈及之下所占用的时间比重明显减小,应用程序层面等待的时间占比很高,这是因为SSD物理硬盘速度不够快,导致应用空转。NVMe也为将来Optane硬盘这种低延迟介质的速度提高留下了广阔的空间。

rq_affinity

See the aliyun test document. The commit that added the value 2 for rq_affinity: git show 5757a6d76c


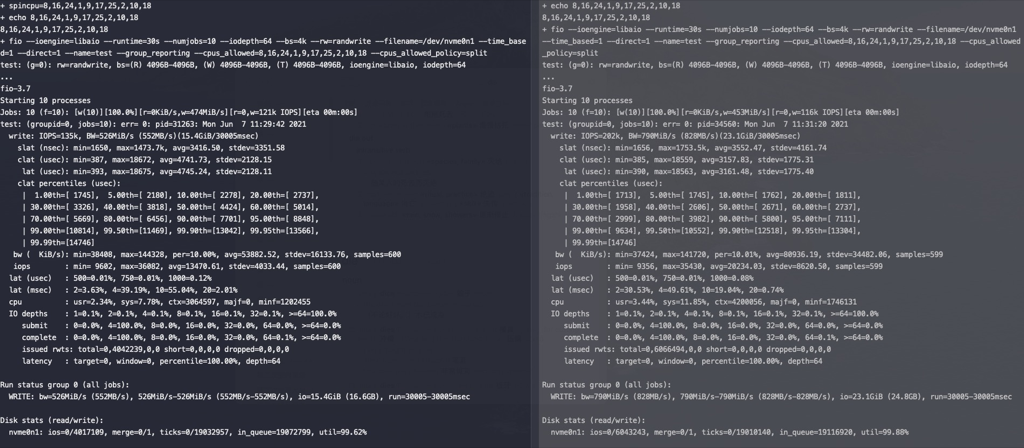

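Testing on an NVMe SSD, with rq_affinity=2 on the left and rq_affinity=1 on the right: with these test parameters rq_affinity=1 performs better (in many later runs the two performed about the same).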
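The current setting can be read from `/sys/block/<device>/queue/rq_affinity`. The sketch below only reads the value; the function names are hypothetical, and the descriptions paraphrase the kernel's documentation of the block queue sysfs files from memory, so check them against your kernel version.

```python
from pathlib import Path
from typing import Optional

RQ_AFFINITY_MEANING = {
    0: "no affinity: completions run wherever the interrupt lands",
    1: "complete on a CPU in the same group as the submitting CPU",
    2: "force completion on the exact submitting CPU",
}


def describe_rq_affinity(value: int) -> str:
    return RQ_AFFINITY_MEANING.get(value, "unknown value")


def read_rq_affinity(device: str, sysfs_root: str = "/sys/block") -> Optional[int]:
    """Return the rq_affinity setting of a block device, or None if unavailable."""
    path = Path(sysfs_root, device, "queue", "rq_affinity")
    if not path.is_file():
        return None
    return int(path.read_text().strip())


# 'nvme0n1' is only an example device name.
value = read_rq_affinity("nvme0n1")
if value is not None:
    print(f"rq_affinity={value}: {describe_rq_affinity(value)}")
```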

LVM performance comparison

Disk information


A single NVMe SSD running MySQL server, with sysbench importing test data:


The same write load on an LVM volume made up of two NVMe drives:


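I don't know why only one of the SSDs sees traffic; it may be because only a single file is being written. Another possibility is that the logical volume is linear rather than striped, in which case the first drive is filled before the second is used.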

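SATA, M.2, PCIe and NVMe in SSDs: what each one means

High-speed signalling protocols

SAS, SATA and PCIe sit at the same level: they are all high-speed serial interfaces.

- SAS is friendly to capacity expansion and also supports dual-controller active-active setups. It is attached through a SAS RAID card and is mostly used in arrays.

- SATA is friendly to hot-plugging. Early SSDs for the desktop DIY market were almost all SATA, and most mechanical hard disks today still use SATA. But the rate tops out at 6Gb/s, which after 8b/10b encoding gives a ceiling of about 600MB/s, and SATA is gradually being replaced by PCIe.

- PCIe supports higher rates and sits closest to the CPU. Many devices such as NICs and graphics cards use PCIe, and so do SSDs. PCIe 3.0 is currently mainstream; its 8GT/s per lane does not look much higher than SATA, but PCIe supports multiple lanes, each running at 8GT/s, so performance multiplies. Mainstream SSDs today use PCIe 3.0 x4 and can reach about 3500MB/s.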
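The bandwidth ceilings in the list above follow from the line rate and the line encoding: SATA uses 8b/10b encoding, PCIe 3.0 uses 128b/130b. A small sketch of the arithmetic (the function name is hypothetical, and protocol overhead above the encoding layer is ignored):

```python
def usable_mb_per_s(gigatransfers_per_s: float, payload_bits: int,
                    encoded_bits: int, lanes: int = 1) -> float:
    """Payload bandwidth in MB/s after line-encoding overhead."""
    return gigatransfers_per_s * 1000 * payload_bits / encoded_bits / 8 * lanes


sata3 = usable_mb_per_s(6, 8, 10)                  # SATA III, 8b/10b
pcie3_x1 = usable_mb_per_s(8, 128, 130)            # PCIe 3.0, one lane
pcie3_x4 = usable_mb_per_s(8, 128, 130, lanes=4)   # PCIe 3.0 x4

print(f"SATA III:    {sata3:.0f} MB/s")
print(f"PCIe 3.0 x1: {pcie3_x1:.0f} MB/s")
print(f"PCIe 3.0 x4: {pcie3_x4:.0f} MB/s")
```

The x4 figure is a little under 4GB/s, which leaves room for the roughly 3500MB/s that real drives reach once packet and protocol overhead are included.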

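Transport-layer protocols

SCSI, ATA and NVMe all belong to this layer, which mainly defines the command set, i.e. the digital logic layer.

- The SCSI command set has a long history and is very widely used. USB drives, SAS disks, UFS storage in phones and many other devices use it.

- ATA runs only over SATA.

- NVMe was designed specifically with NAND in mind and is more efficient than the two above. It runs mainly over PCIe; there are also NVMe-MI, NVMe-oF and so on. It is a very good transport-layer protocol.

Physical interfaces

M.2, U.2, AIC and NGFF are physical interfaces (NGFF is the earlier name for M.2).

An M.2 drive can be either a SATA SSD or an NVMe (PCIe) SSD. A SATA/PCIe select signal on the edge connector distinguishes the two. The M.2 slots in many laptops support both types of drive.

M.2 is mainly used in laptops. Its advantage is small size; its drawback is poor heat dissipation.

U.2 is mainly used in data centres and by enterprise users, wherever hot-plugging is in demand. Its advantages are hot-plug support and decent heat dissipation. These are mostly PCIe SSDs (there are SAS SSDs too); limited by the connector, they support at most 4 PCIe lanes.

AIC (add-in card) is mostly used by enterprise and industry users. It typically supports 4 or 8 PCIe lanes, so the bandwidth ceiling is higher.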

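Data summary

- Performance ranking: NVMe SSD > SATA SSD > SAN > ESSD > HDD

- The local SSD performs best; the SAS mechanical disks (RAID10) perform worst.

- The SAN storage goes over a dedicated fibre network rather than a TCP-based SAN (at least, no SAN traffic shows up on the NICs); its performance sits in the middle.

- In terms of rt, ssd:san:sas is roughly 1:3:15.

- The SAN outperforms the local SAS mechanical disks, which probably depends on the SAN's network transfer performance and on the devices inside the SAN storage (for example, SSDs rather than mechanical disks).

- The NVMe SSD is much faster than the SATA SSD, and its latency is more stable.

- Alibaba Cloud's ESSD cloud disk performs even better than the local SAS RAID10 array.

References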

http://cizixs.com/2017/01/03/how-slow-is-disk-and-network

https://tobert.github.io/post/2014-04-17-fio-output-explained.html